A decade of Bioinformatics London: A Conversation with Paul Agapow

Date Posted: Tue, 31 Mar 2026

I do mathy, dry computery things to squishy, wet living things.

That’s how Paul Agapow describes his work, and it’s one of the most memorable, “yeah, I get it” ways of describing what we do in this field.

He’s someone I’ve known for a while now, and an absolute fountain of knowledge. He’s best known (at least in the capital) as one of the people behind Bioinformatics London – a meetup group that’s been running for over a decade, bringing together people who work across biology, data and computation.

The group now has more than 1,600 members. Each month they meet to hear a short talk from an industry figure, followed by a longer, less structured discussion at a London pub.

If that sounds like your thing, information on how to join the group is at the bottom of this piece.

We recently spent some time talking about how the group came about, how the space has changed over the last 10 years, and what people working in and around BioAI are talking about at these meetups. Here is an overview of what we discussed.

A spotlight on Bioinformatics London, and Paul Agapow’s commentary on the industry.

What originally motivated you to start Bioinformatics London?

I have to credit my co-founders Mark Bartlett and Nathan Lau with the original inspiration. But the feeling we all shared was one of frustration and isolation.

We were working at the intersection of computation and biology – which was becoming increasingly essential to biomedical research – but we were also profoundly siloed. Most people worked alone as the sole “computer guy” in a group of biologists, an army of one for analysis problems of any type.

There was another layer of siloing on top of that. Academia rarely talked to industry; statisticians weren’t talking to software engineers; immunologists didn’t see useful tools that plant scientists were developing.

London had this enormous concentration of talent, but no mechanism for people to run into each other and have those casual, serendipitous conversations that can be so important. Bioinformatics London was an attempt to create a third space for exactly that.

Did you expect the group to still be running 10 years later?

Frankly, no. Not that we expected it to fail, but like most people starting a meetup, we were just hoping it would survive its first three sessions.

What surprised me and continues to surprise me is that the need for it hasn’t diminished. If anything, as the field has grown more complex and more interdisciplinary, the appetite for informal knowledge-sharing has grown with it.

When you’re working at the cutting edge, the literature runs 12–18 months behind practice, and the hype threatens to drown out the signal. Communities like ours become a kind of combined filter and early-warning system: what’s actually working, and what you can safely ignore.

What do you feel has changed the most within this space over the past decade?

It’s difficult to communicate to newcomers just how computational approaches to biological problems used to seem a little disreputable, something that wasn’t “real science” and was just tolerated.

I remember attending a job interview where the academic panel complained loudly about the need for bioinformaticians and how, surely, in a few years this would all die out or somehow be taken care of. Bioinformatics London was founded around the time of a great sea change, when biomedical research had to become computational.

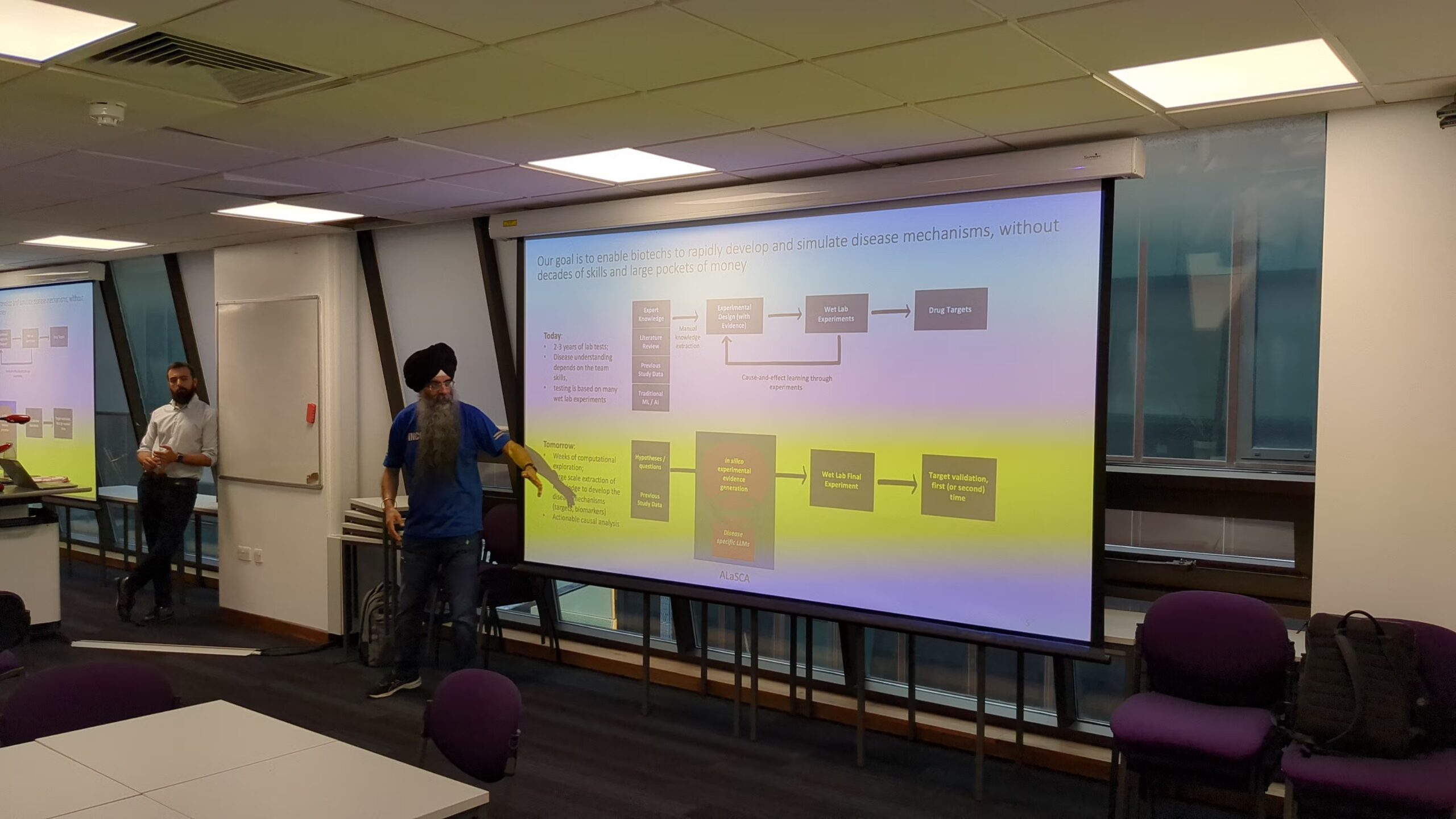

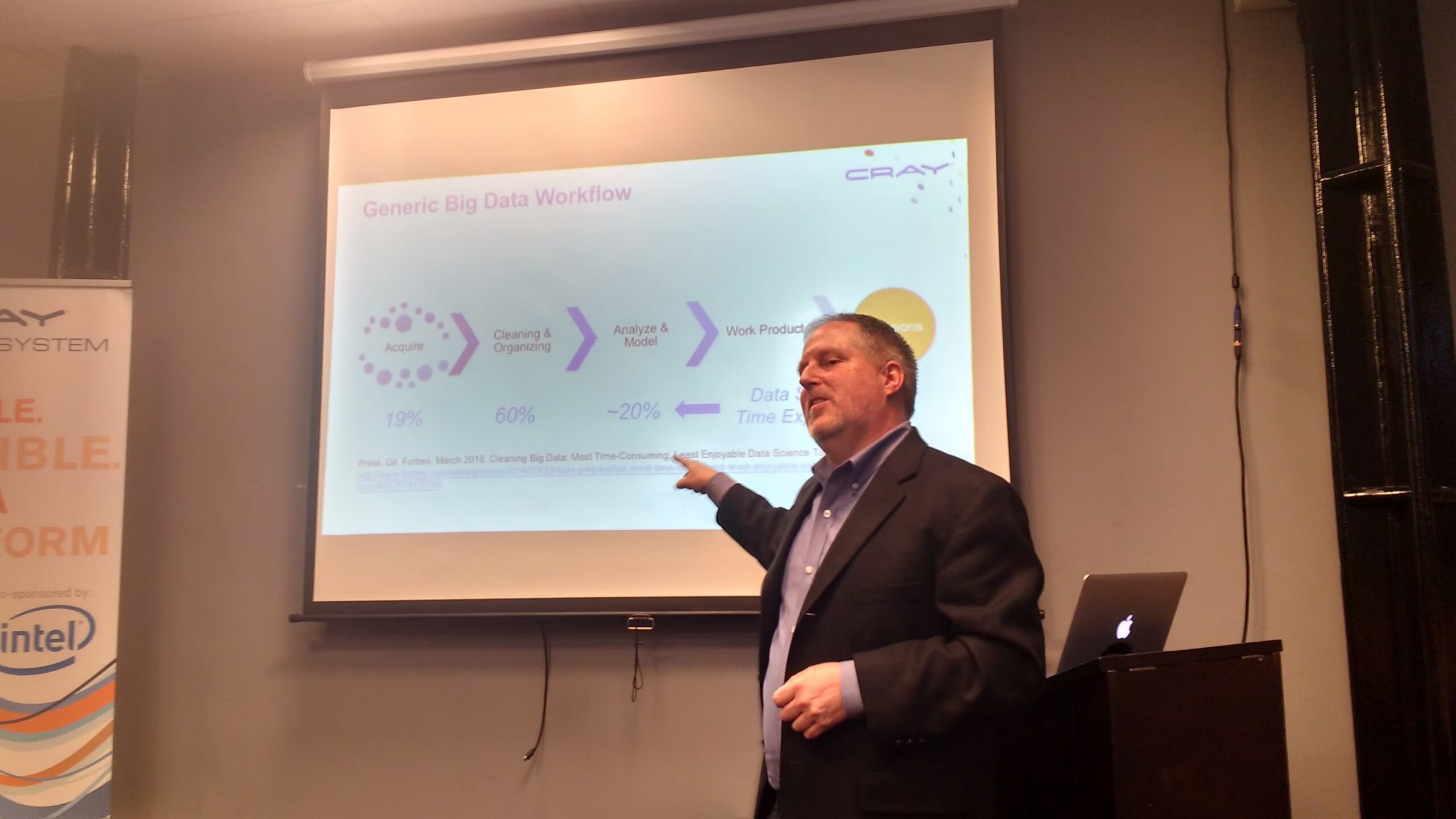

What’s also changed is the scale and the tooling to match it. In 2016, the computational challenges were immense even for relatively modest datasets. Labs would boast of their “pipelines” (largely very shaky shell scripts) running over megabytes of data. Now we routinely discuss foundation models trained on pan-cancer genomics, spatial transcriptomics at single-cell resolution, and multimodal integration of imaging, clinical records, and omics. It’s breathtaking.

But perhaps the more profound change is who is in the room. The field used to be dominated by academics, often self-taught, with PhDs in statistics or genetics. Now industry dominates, and you routinely see software engineers, data scientists, people with postgrad qualifications in biomedical analytics and AI, and clinicians who’ve taught themselves Python.

In your opinion, what role do informal communities like this play in scientific and technical progress?

A much larger role than they’re given credit for. Formal institutions – journals, conferences, universities – are essential, but they’re inherently slow and come with their own biases and interests.

By the time something becomes a paper, it’s already been through years of review. By the time it becomes a conference talk, the speaker has refined it into something polished. What you lose in that process is the texture of how people are actually thinking and working: the wrong turns, the half-formed intuitions, the “we tried this and it didn’t work.”

That texture is enormously valuable. Informal communities are where it can live. They’re places where people have permission to be uncertain – and I think that’s deeply underrated as a mechanism for learning.

Do you think the term “bioinformatics” still captures what the field has become?

I do not. Actually, I’ve never particularly liked the word.

The term was never well-formed; it just congealed. It was coined in an era when the dominant challenge was sequence alignment and genome annotation, and metastasised to encompass everything done using a computer on biological data. People would occasionally try to codify a definition, or reason their way to where the field’s boundaries lay, but no one could ever agree. So it started as a grab-bag term for genomicists, molecular evolutionists and computational chemists, and has ended up even broader: multi-omics integration, causal inference from observational health data, large language models applied to protein structure and clinical text, AI-driven trial design…

I don’t think this has been good for the field. It allows you to bundle together all those diverse fields, with their diverse needs and methodologies, and throw them in a drawer labelled “computer stuff”. But it’s not to do with computers; they’re just a means to an end. It’s to do with data and how we use it to understand biology. I’d love to change the group name and labelling, but people are very attached to “Bioinformatics London” as a brand. Let’s see if I win that argument.

Can you give an insight into some of the most recent, exciting discussion points?

There’s always a new New Thing. AI is front and centre, of course, but what’s striking is that bioinformaticians are increasingly convinced it’s actually useful. Foundation models in particular are dominating the conversation, and there’s a growing sense that they genuinely help us grapple with complex, high-dimensional biological data. These systems don’t have to be perfect; they just have to be better than the baseline, and they are.

There’s also considerable excitement about agentic AI – arguably running a little ahead of our ability to deliver on it – but the promise is real: automation, literature synthesis, document generation, taking care of a great deal of the drudge work that consumes scientific time.

Each new ‘omic technology goes through what I think of as its “wild west” phase: the technology is shifting rapidly, there are no best practices, and comparing results across labs is difficult or impossible. Transcriptomics went through it. Proteomics went through it. Spatial transcriptomics and single-cell technologies are still in that phase, but they’re already enabling exciting discoveries about tissue architecture and expression patterns that simply weren’t visible before.

On the clinical side, translation remains the real litmus test. The models exist – but can they survive validation, regulatory navigation, and embedding into real clinical workflows? There’s strong momentum around multimodal models that reason across imaging, pathology, and electronic health records simultaneously. People with hybrid skills, who understand both the biology and the implementation constraints, are in enormous demand.

Two areas I’m personally most enthusiastic about: causal inference – real causal reasoning, not the shallow chain-of-thought that LLMs practise – because biological data is complex and strewn with confounders, and we need sophisticated approaches to tease apart cause from effect. And experimental design: many scientists are poorly equipped for it, so what if AI could propose a series of experiments that close the loop between model and wet lab? That kind of active learning approach could help us iterate and learn dramatically faster.

Any final thoughts?

Bioinformatics London wouldn’t have survived this long without the efforts of so many people. I’ve mentioned my co-founders Mark Bartlett and Nathan Lau, but I also want to recognise Stephen Newhouse, who joined very early on and brought his own bioinformatics discussion group with him, and Andy Nuzzo and Manuel Corpas, who are ably leading the community now.

If you’re working at the interface of computation and biology – whether you’re a student just finding your feet or a seasoned practitioner – come find us. The conversation is always worth having.

If you’re based in the South East and work in bioinformatics, biostatistics, biodata, biomedical analysis or genomics, Bioinformatics London is a group well worth joining.

New meetups can be found here, and for a flavour of what the group discusses, you can browse previous events on their old Meetup page.

You can follow them on LinkedIn here.

And join their WhatsApp group here.

Written By:

Joe Phillips

Connect on LinkedIn